Anthropic CEO Dario Amodei was still arguing about Claude’s red lines in Washington, DC, when Claude went to war. A few hours after the US Department of Defense terminated its contract with Anthropic, US officials may have used Claude in their airstrikes against Iran. Weeks later, how and in what capacity is still unknown.

The Pentagon’s ongoing dispute with Anthropic is the most visible symptom of a deeper malaise. In recent months, a number of AI ethics and safety experts have resigned from top AI companies. They weren’t the first, and likely won’t be the last. And if the resignations continue, the future of AI will be decided without the people who care enough to mitigate its risks.

For most people, the risks of AI are often remote—the kind of risks that we assign to experts in the same way that we expect aeronautical engineers to think about plane crashes so that we don’t have to.

The spectrum of AI risks is new, and the community of professionals who will manage it is still taking shape. Nuclear experts have been here before. And their history offers a lesson: keep dissenting voices in the room or risk a fractured class of professionals whose motivations don’t align with the public’s.

In interviews and to employees, Amodei incessantly recommends one book: Richard Rhodes’s nearly 900-page The Making of the Atomic Bomb, a Pulitzer Prize-winning account of the Manhattan Project.

It is not a triumphant text. Rhodes documents the repeated failure of scientists to control how their new technology would be used. Physicists Leo Szilard and James Franck, and even J. Robert Oppenheimer, tried to impose conditions on the use of the bomb, arguing for international control of nuclear technology. Their efforts were overtaken by the demands of the emerging Cold War.

Those who made their peace with the new concept of national security, such as John von Neumann and Edward Teller, joined a new elite class, the “nuclear priesthood.” They developed new techniques and technologies such as game theory and advanced computing. They spoke their own technical language and made sense of nuclear warfare, escalation, and deterrence outside the scope of public scrutiny. Dissident voices that diverged from the nuclear orthodoxy (proponents of disarmament, smaller arsenals, or even different deterrence postures) were removed from centers of power and planning. Oppenheimer, Bernard Brodie, Daniel Ellsberg: dissidents were discredited, sidelined, or ignored.

The lesson that Amodei and others seem to draw from Rhodes is one of unintended consequences: The scientists who created a transformative technology often failed to control it. But AI advocates may also be drawing another, unintended and dangerous, lesson from this history. Despite the unheeded warnings of Manhattan Project scientists, the massive buildup of weapons, and superpower confrontations that often turned violent, no leader has used nuclear weapons in war since the United States attacked Japan in 1945. Perhaps this means that the system worked without requiring dissent. Even if Amodei himself is not overly reassured, some AI researchers may be reading nuclear history as a false source of comfort.

There is a catch. Historical research on close calls (false warnings; nuclear weapons accidentally dropped from planes, lost at sea, or shipped by mistake; and conflicts that came close to escalating out of control) suggests that nuclear war was only narrowly avoided, again and again. The nuclear record thus suffers from a survivorship bias, in which the apparent success of nuclear policy in preventing nuclear war discounts the important role played by luck.

This record has obscured the catastrophic potential of nuclear weapons, pushing the horror of nuclear war into the psychological background. The worst dangers of artificial intelligence, like those of nuclear weapons or engineered pathogens, seem remote. They are hard to imagine and seem likely to happen somewhere else or to someone else, if we believe they will ever happen at all. This is a problem of psychological distance: the subjective sense that a threat is far removed from the self, the here, and the now.

So we rely on communities of experts, or “priesthoods,” to remember distant dangers because the public cannot. We are too consumed by our daily worries or distracted by the fever pitch of the news cycle. We trust the priesthood to keep us safe, and distance enables these groups to capture and control danger largely outside the public eye.

But the priesthood may develop different interests and face different incentives than the public. When those interests diverge sufficiently, the priesthood becomes problematic, as when it sacrifices safety in pursuit of profit or prestige.

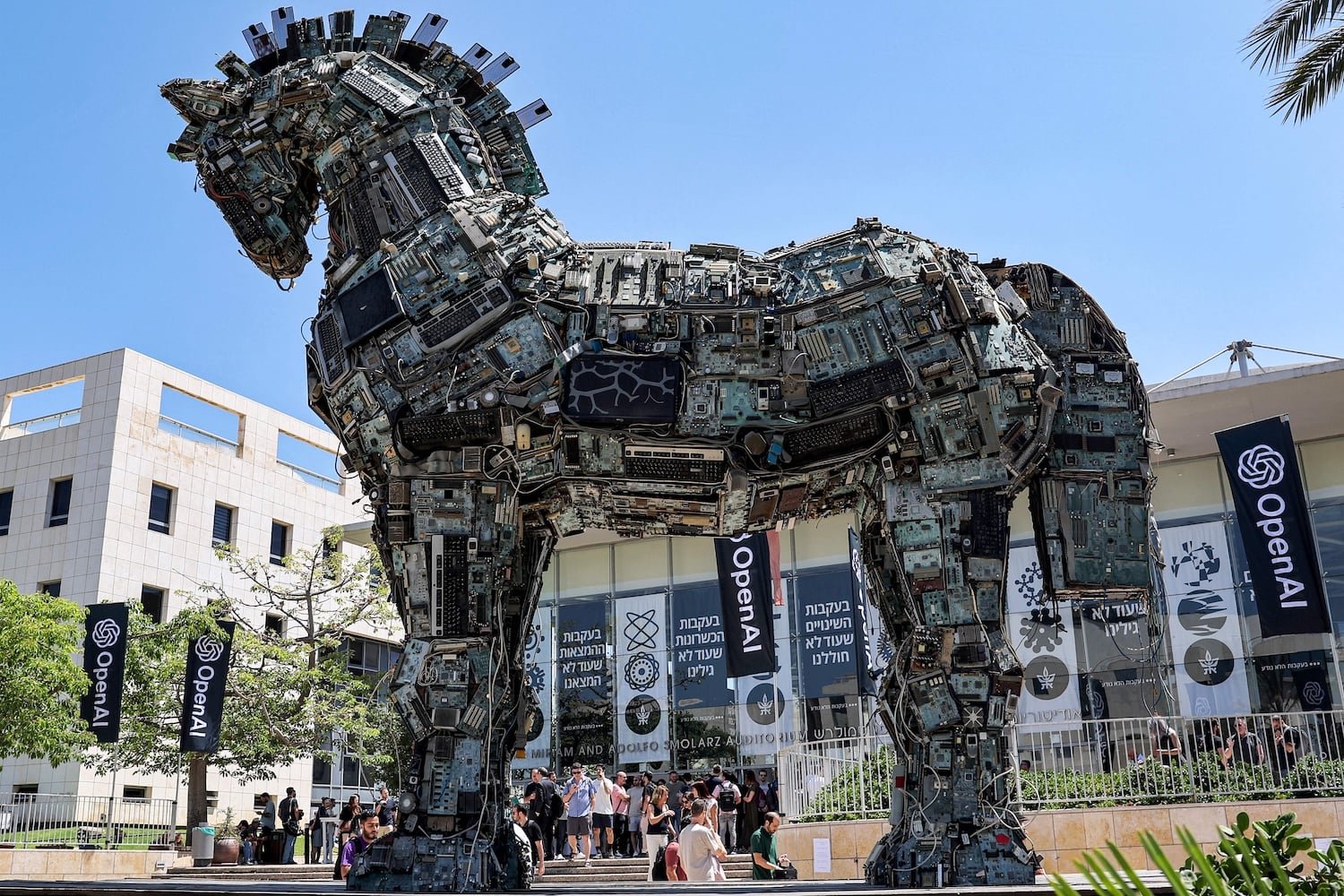

The resignations of AI safety researchers from Anthropic and OpenAI do not bode well for the emerging AI priesthood. The competitive logic of the AI race may be doing to them what it did to the dissenting scientists of the Manhattan Project. AI companies will show them the door, pay lip service to their concerns, and build bigger models. Market incentives may be even stronger than Cold War competition in silencing opposing voices. Geopolitical incentives push in the same direction by nesting competition among US AI developers within a larger contest with China. The speed and scale of frontier model development mean that efforts to regulate AI from above, such as the EU’s AI Act or the United Nations’ International Dialogue on AI Governance, always appear several steps behind.

Frontier competition has sometimes been presented as a safety strategy: Companies on the frontier can discover and fix design flaws by releasing models, step by step, into the world and seeing what happens. But the race-to-safety logic assumes that failures are recoverable and clearly visible. AI’s “black box” outcomes are ambiguous in ways that make failures difficult to trace and difficult to learn from.

The AI priesthood is thus taking shape against strong headwinds. Controlling AI may be a bigger challenge than controlling nuclear weapons because its perceived risks are everywhere: discriminatory deployment, mass surveillance, the erosion of freedom, and even superintelligent “killer AI” are all on the AI safety agenda. This breadth, together with commercial pressure to compete, makes risk management all too easy to postpone.

Moreover, nuclear weapons may have changed international relations, but they are ultimately very large bombs with a limited context of use. AI, by contrast, has a context of use so broad that it has been likened to electricity and water.

The good news is that if the nuclear example shows what can go wrong, other industries, especially those with frequently used, safe, reliable, everyday technologies, can show what can go right. The history of aviation, for example, suggests that durable safety architectures are neither purely voluntary nor simply imposed from above. They emerge from complex interactions among accidents, legal claims, engineering expertise, organizational change, and regulatory pressure. Aviation safety improved over time in part because a single plane crash, such as the runway collision at LaGuardia on March 22, has a limited impact on the industry as a whole and therefore allows aviation safety experts to find solutions to problems after they occur.

Regulatory pressure on AI has been erratic so far, but the emerging record points away from safety. In 2025, President Donald Trump’s White House revoked the Biden administration’s 2023 executive order on AI and narrowed the mandate of its AI safety institute from broad safety oversight to national security and competitiveness, even removing “safety” from the institute’s name. In mid-March, it unveiled a light-touch national legislative framework that calls on Congress to preempt state AI laws that conflict with its priorities.

The White House and industry representatives have criticized the European Union’s 2024 AI Act for what they see as regulatory overreach, as Europe’s AI talent continues to move elsewhere. A governance gap has emerged in place of a grand bargain on AI: Where regulation has teeth, AI investment moves away; where it moves to, regulatory oversight diminishes. This regulatory asymmetry could pull the interests of the AI priesthood further and further from the public’s.

What comprehensive AI safety governance may unfortunately require is a disaster that closes the psychological distance and spurs action. This could be a clear, tangible disaster, like the midair collision over the Grand Canyon that paved the way for the Federal Aviation Administration, that is both publicly visible and dramatic enough to make the effects of AI feel close to home. Without such a stimulus and the sustained pressure that follows, the costs of procrastination are more bearable than those of caution.

The failures of AI so far remain diffuse and probabilistic, their consequences distributed across millions of interactions rather than concentrated at a single wreckage site. They are still more like incidents than accidents: single-component errors that, although harmful, can be corrected after the fact. This reduces outside pressure to put more safety measures in place for AI, and it makes the case for safety harder to make internally, too, especially given engineers’ incentive to build better, faster, and more powerful systems before building safer ones.

AI also has another safety problem: speed. Aviation had a period of small-scale failures before it flourished. In the late 1920s, airplane accident rates were high enough that, if applied to today’s airline traffic, nearly ten thousand people would die each year. By the 1950s, aviation safety had improved: about a hundred deaths a year in the United States, in an industry 35 times smaller than today’s. But the 1950s rate would still result in thousands of deaths a year at today’s traffic volume, rather than the few hundred recorded in 2025.

The widespread, rapid, and multi-domain adoption of AI makes it a challenging safety case. Right now, its potential harms range from bad medical advice to the end of humanity. The greater the safety challenge, the greater the need for creative thinking. AI companies that are serious about safety and regulation will need to find ways to keep the opposing voices in the room, the hard-headed devil’s advocates, and shield them from the pressures that push them out.

AI companies may also have self-interested incentives to do so. Companies that are guided by principles, and that treat safety and ethics researchers as constructive critics rather than internal threats, can bring safer products and better reputations to market. After OpenAI signed the Pentagon contract instead of Anthropic, Claude got a big boost in popularity, topping its rival for the first time in the US app store rankings.

During the Cold War, the US government silenced dissenting nuclear experts, and the world survived. If global consumers don’t want to gamble with AI, they should push companies to take a more responsible approach to safety.